Making Patent Data AI-Ready: Stop Feeding the Model Ambiguity

by Kent Richardson

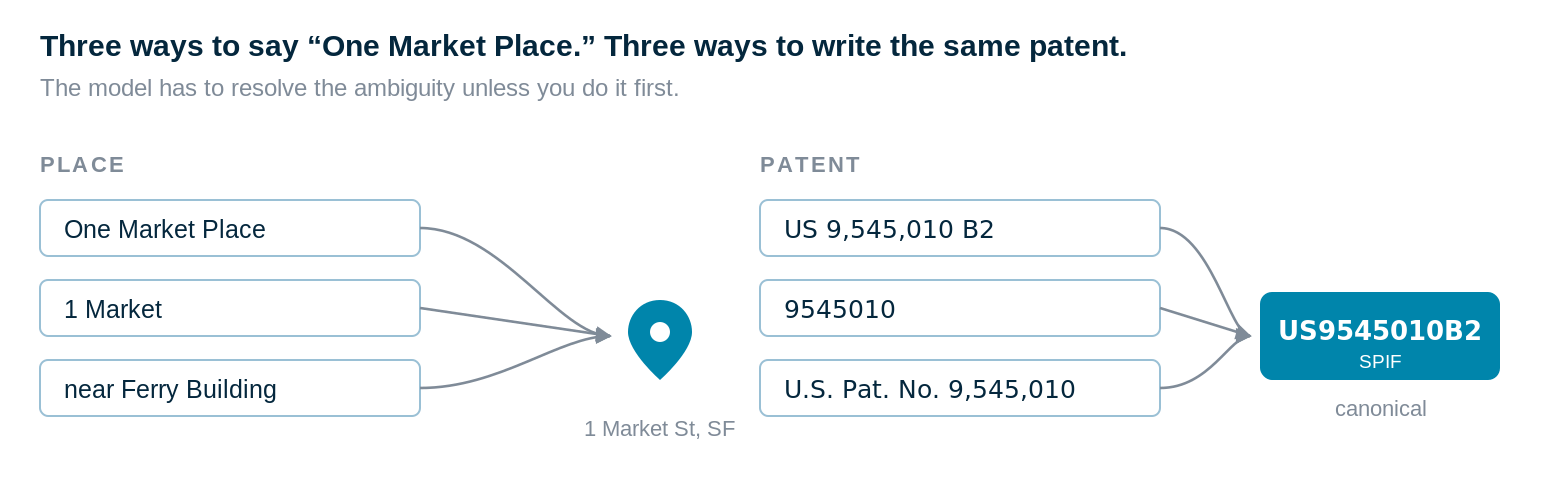

Ask an AI for things to do near "One Market Place" and you'll get nothing useful — wrong city, wrong country, no context. Add "in San Francisco" and it works. But if you then call it "1 Market" or "the place next to the Ferry Building," the model has to resolve the ambiguity itself. Sometimes it does. Sometimes it doesn't.

That's what we do to AI tools every day with patent identifiers.

We built a platform to run thousands of patents through prompts. One thing we learned quickly: ask the model to do too much and you get garbage mixed into the results. So we constrain what the model handles at any one time. A simple piece of that is not feeding it bad patent identifiers. Small fix. Compounds across tens of thousands of prompts.

SPIF was launched to solve a deceptively simple problem: knowing which patent you actually mean. We worked with Google, RPX, Cipher, and Unified Patents to standardize the format. The spec is published, and you can download SPIF-formatted patent lists from Google Patents and Cipher.

The AI issue

To a human — and to many systems — these all look the same:

US 9,545,010 B2 — ad hoc, with countless variations

US-9545010-B2 — hyphenated

US9545010B2 — SPIF

The third is easier for a human to select and copy cleanly. Now, try double-clicking the first one; that is not a great user experience, especially when you might do it dozens of times. For machines, and especially for LLMs, the difference is bigger than that.

SPIF also saves tokens, though that's the lesser benefit:

US 9,545,010 B2 → ~10 tokens

US-9545010-B2 → ~7 tokens

US9545010B2 → ~6 tokens

The point isn't tokens. SPIF gives the model a canonical key. Patent numbers are not prose; they are identifiers. Inside a reasoning model, an identifier is useful only if it behaves like a stable pointer. The moment the same asset shows up in multiple formats, one reference becomes several surface forms, and the system has to normalize before it can reason.

SPIF is a canonical schema. It separates application numbers from publication numbers, enforces consistent formatting, and standardizes enough that tools stop guessing which patent you meant.

Why this matters for AI

Most non-standardized formats fail in ways that are fatal for machines and only annoying for humans. Humans clean up messy formats in their heads. Models can too — but you are then spending context, attention, and error budget on cleanup instead of analysis.

Ad hoc patent IDs introduce real ambiguity: missing country codes, dropped leading zeros, wrong kind codes, strings that could be either an application or a publication. Retrieval gets brittle. Reasoning wanders. Production systems end up quietly wrong.

From an AI-tool perspective:

A structured SPIF row is best

A hyphenated string is acceptable

A human-formatted string with commas and spaces is actively harmful

The model can handle all three. That does not mean you should give it all three.

SPIF also provides something the bare string does not: redundancy. Title, filing date, optional family identifiers — fields that help verify identity. That redundancy is what AI workflows actually need: less uncertainty about which patent you meant.

So which format works best inside context windows and reasoning models? SPIF — because it reduces surface-form entropy and makes identifiers behave like stable pointers. When your IDs are inconsistent, you are asking the model to resolve "One Market Place," "1 Market," and "the place next to the Ferry Building" at scale.

If you are feeding patent data into AI tools, format the list in SPIF before it touches the model. The cleanup you skip on the input side is cleanup the model will not have to do — usually badly — on the output side. You can learn more about SPIF here.